The most dangerous AI decision a leader can make is not the wrong bet. It is making no conscious bet at all — and letting default behavior fill the void.

Most organizations already have an AI strategy. They just did not choose it. It emerged from competitive pressure, inertia, and the cumulative weight of decisions that were never framed as strategic. The result is not chaos — it is something more insidious: a coherent posture that no one consciously designed and that is now surprisingly difficult to change.

The evidence is everywhere. Across industries, individual productivity is rising: employees use AI to finish work faster, reclaim time, and quietly outperform their measured targets. Yet organizational output is flat. This is not a technology failure. It is a strategy failure: the predictable consequence of an accidental bet on individual enablement that no one consciously made.

Another pattern is equally telling. Some organizations celebrate pockets of AI-driven value — a champion here, a successful pilot there — without asking why those wins haven’t spread. The answer is almost always the same: the underlying bet was never named, so it could never be replicated.

In short, we are not short of activity. We are short of one thing: intentionality.

Yet beneath all this activity, a pattern is emerging. Organizations are not just at different levels of maturity. They are locked into fundamentally different postures, most of them inherited rather than chosen.

Some of these accidental bets will prove wise. Most will not. And within each category, the difference between organizations that thrive and those that don’t will have less to do with which bet they landed on and more to do with whether they ever realized they had made one.

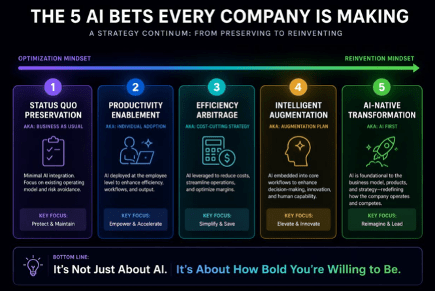

The big job for leaders is to choose the right stance on AI for their organizations — to make an intentional bet. Below, we name the five bets organizations are already making — so that leaders can finally choose, rather than simply inherit.

The Five Accidental Bets

The important point before reading these is this: you are already in one of them. The question is whether you got there on purpose.

Bet 1: Status Quo Preservation. The first bet is business as usual — in other words, to avoid doing anything. This is rarer than it sounds, but more common than executives admit. Some companies, particularly long-standing private firms with a healthy skepticism of hype, are largely indifferent to AI. They have seen waves come and go — from blockchain to the metaverse — and have concluded that ignoring the noise is often a rational strategy. This stance is easy to mock in an age of AI-induced FOMO. It is also not obviously wrong. If AI fails to deliver meaningful value at scale, or if the economics disappoint, restraint may look like foresight rather than complacency.

Bet 2: Individual Productivity Enablement. The second bet is about helping individuals increase their productivity. These organizations see gains in specific tasks, but treat the AI revolution as an extension of the broader technology revolution: making some tasks quicker and easier for the workers who already do them. Adoption metrics are tracked with enthusiasm. Usage is sometimes mandated, and occasionally enforced with the corporate equivalent of public shaming, such as publishing usage rankings. The stated goal is not to be left behind. But without a conscious choice about what “ahead” actually means, these organizations are simply accelerating in an unexamined direction. This is the bet most commonly made by accident, and the one most likely to produce the dynamic described above, in which individual productivity rises while organizational output stays flat.

Bet 3: Efficiency Arbitrage. The third bet is the cost-cutting strategy. This is adoption with sharper intent and shorter horizons. AI is used to automate tasks, reduce headcount, and deliver immediate efficiency gains. Entry-level roles are the most obvious targets. The logic is compelling, especially under competitive pressure. If peers promise 10% efficiency gains, it is tempting to promise 12%. The risk is equally obvious. Organizations may improve margins today while undermining their talent pipelines tomorrow. After all, if AI does the grunt work, who learns the craft?

Bet 4: Intelligent Augmentation. The fourth bet is the augmentation play. This is more ambitious. Instead of asking what AI can replace, leaders ask what AI can do to enhance their competitive edge and better serve their customers. Work is redesigned so that humans and machines complement each other. Routine tasks are automated, but human judgment, creativity, and social intelligence are amplified. This often involves a conscious investment in talent and culture. Employees are not just expected to use AI but to think differently. Culture, in this model, becomes a competitive advantage rather than an afterthought.

Bet 5: AI Native Transformation. The fifth bet is AI-first. Here, organizations assume that AI will continue to improve rapidly and structure themselves accordingly. Human involvement is minimized wherever possible. Processes, roles, and even business models are rebuilt around automation. At the extreme, this includes the much-discussed “one-person unicorn,” where a single individual, augmented by AI, can build and scale what previously required entire teams. This is the Shenzhen model, where speed, iteration, and technological leverage trump tradition, and where debates about eliminating jobs or augmenting human potential get less attention than humanoid robots. It is bold, occasionally effective, and heavily dependent on continued advances in AI.

Your Industry Has Already Made Your Bet for You

The accidental bet is not random. Industry structure, competitive economics, regulatory environment, and talent culture all exert gravitational pull toward a default posture. Understanding that pull is the first step to deciding whether to go with it or against it.

- Legal and professional services: Billable-hour economics, liability exposure, and a deeply conservative partnership model pull almost inevitably toward Bet 2 or Bet 3. Efficiency gains are visible and defensible; transformation feels risky and culturally foreign.

- Software and technology: Iteration culture, abundant technical talent, and existential competitive pressure from AI-native startups make Bet 5 the default gravitational field — often before leaders have consciously evaluated whether it is right for their specific organization.

- Financial services: Regulatory scrutiny and data sensitivity create structural friction against full automation, producing a default cluster around Bet 3 with Bet 4 as an aspiration that is perpetually deferred.

- Healthcare: Safety culture and compliance burden create institutional conservatism that anchors most organizations between Bet 1 and Bet 2, regardless of what their innovation teams are piloting.

Knowing your industry’s default bet does not mean accepting it. It means you can make a genuinely informed choice about whether to follow the current, resist it, or move faster than peers who are still being carried by it without noticing.

What Determines Success Within Each Bet

Choosing a bet is only the beginning. Success depends on how well that bet is executed across five dimensions. One of the paradoxes of AI is that adopting it still requires the messy work of leadership and organizational transformation.

The first is strategy and vision. Do you have a clear understanding of what AI can realistically do for your business today? Not in theory, but in practice. Have you identified where it enhances or changes your value proposition, your cost structure, or your competitive positioning? Vague enthusiasm is not a strategy.

The second is the target operating model. How does work actually get done? Which processes are automated, which are augmented, and which remain human-led? Many organizations adopt AI tools without redesigning workflows, and then wonder why little changes. Technology rarely fixes a flawed process. It usually amplifies it.

The third is talent. What skills do you need in an AI-enabled organization? How do you acquire them, and just as importantly, how do you develop them internally? Which roles or parts of roles become more important, not less? The uncomfortable reality is that AI raises the bar for human contribution. Knowing more is less valuable when answers are abundant. Knowing what to do with those answers becomes critical. Ultimately, the winning combination will be integrating the best of human capabilities with the opportunities the technology offers.

The fourth is leadership. Leaders must move from being sources of answers to curators of judgment. This includes asking better questions, evaluating AI-generated outputs, making decisions under conditions of synthetic certainty, and creating an environment that enhances learning from failures. The polite term for AI errors is “hallucination.” The less polite term is nonsense. Telling such errors apart from sound output is rapidly becoming a core leadership skill.

The fifth — and often underestimated — is culture. How does your organization respond to AI? With curiosity or fear? With experimentation or compliance? Culture determines whether AI is used thoughtfully or superficially. It also shapes whether employees see AI as a tool for growth or a threat to their relevance. In a world where technology is increasingly accessible, culture may be the most durable source of advantage.

Betting, Measuring, and Pivoting

One of the complications of AI strategy is that the target is moving. Capabilities improve, costs fall, and new use cases emerge at a pace that makes long-term planning uncomfortable.

This makes it tempting to avoid commitment altogether. That is a mistake.

The alternative is to make a clear bet, define what success looks like, and measure it rigorously — not just adoption rates, but impact on performance, quality, and outcomes. And then, crucially, to be willing to pivot. The best organizations treat their AI strategy not as a fixed plan, but as a series of informed experiments.

This requires a degree of intellectual humility. It also requires discipline. Pivoting is not the same as drifting.

The Only Question Worth Asking

Previous technological disruptions rewarded integration over speed — and the same logic applies here, at greater scale and velocity.

In the long run, successful organizations will not be those that simply “use AI.” They will be those that looked honestly at the bet they had already made — and decided, deliberately, whether to keep it.

The five bets are not a menu. They are a mirror. Most organizations will recognize themselves in one of them — not because they chose it, but because gravity, competitive pressure, and inertia chose it for them. The only question worth asking is whether you got lucky.

The opinions expressed in Fortune.com commentary pieces are solely the views of their authors and do not necessarily reflect the opinions and beliefs of Fortune.

This story was originally featured on Fortune.com